The Duolingo English Test is a new breed of assessment, a digital-first high-stakes exam that is available on demand, 24/7 anywhere in the world. But this continuous, global test administration poses enormous quality assurance challenges, as every test must be monitored to ensure score validity. Learn how we combine human expertise and artificial intelligence to ensure the quality of a new generation of assessments.

The contest and the measurement

High-stakes exams impact people’s lives, so it’s crucial they meet what assessment scientists call “the contest and the measurement” standards of a test — that is, they must give everyone a fair opportunity to prove their ability in a certain area, and measure that ability accurately (Holland, 1994).

One way to ensure this is to monitor test scores over many administrations of the test; if scores are comparable, this helps test developers to determine that the test is valid. To help test developers identify and prevent possible errors that might jeopardize test score validity, the International Test Commission has laid out quality assurance guidelines: step-by-step procedures for regulating and monitoring high-stakes assessments.

The challenge is, the Duolingo English Test isn’t your average test. Unlike traditional brick-and-mortar operations, where a single form of the exam is administered to people gathered together on a set day and time, the Duolingo English Test is administered continuously, on demand, anytime and anywhere in the world. The old guidelines simply weren’t designed to accommodate the complexity of this new system.

“We can’t just conduct quality assurance for a few days during and after a test and be done,” says Mancy Liao, a scientist on the Assessment Research team. “We need to monitor everything, everywhere, all the time—it’s a huge undertaking.”

AQuAA: A fluid system

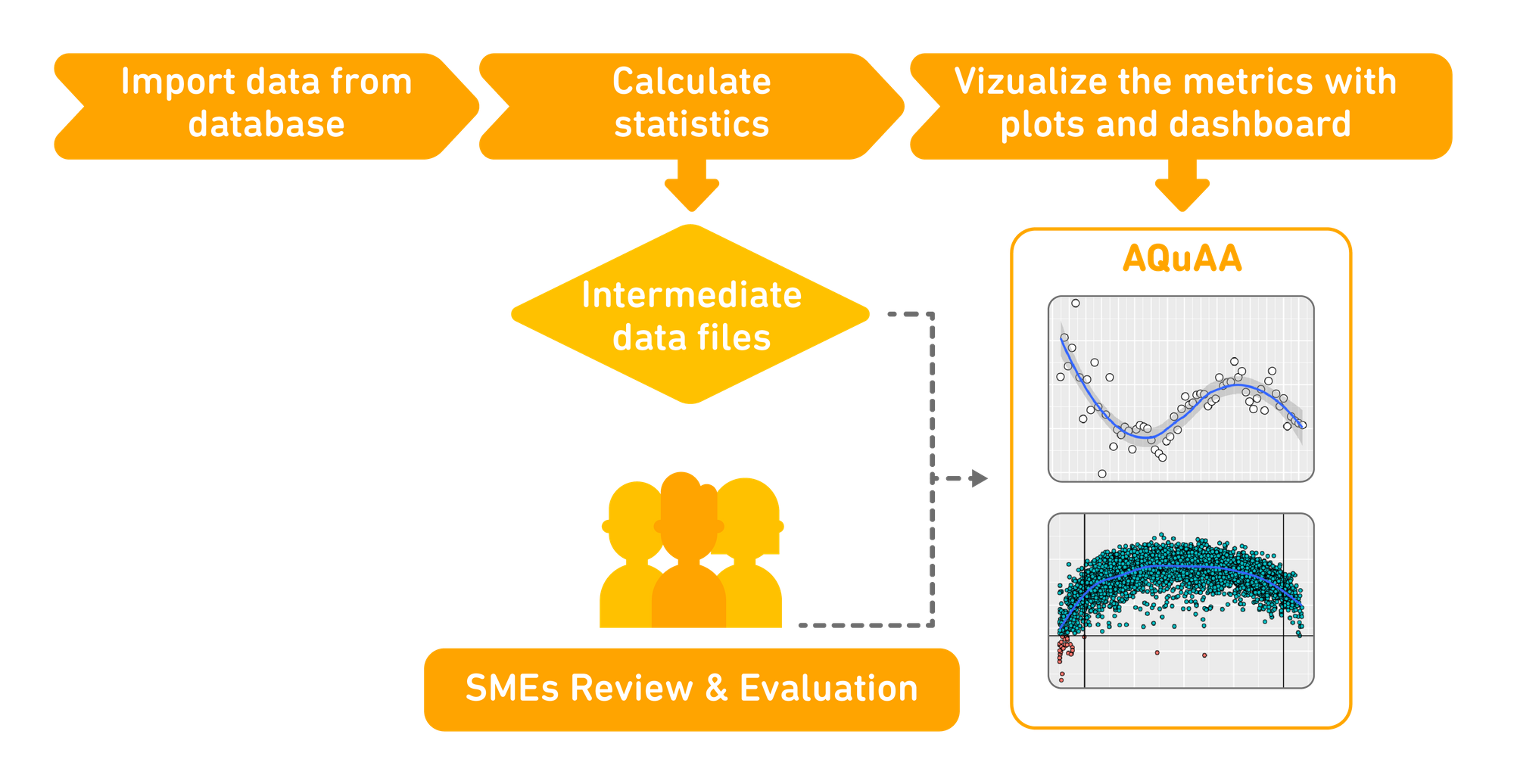

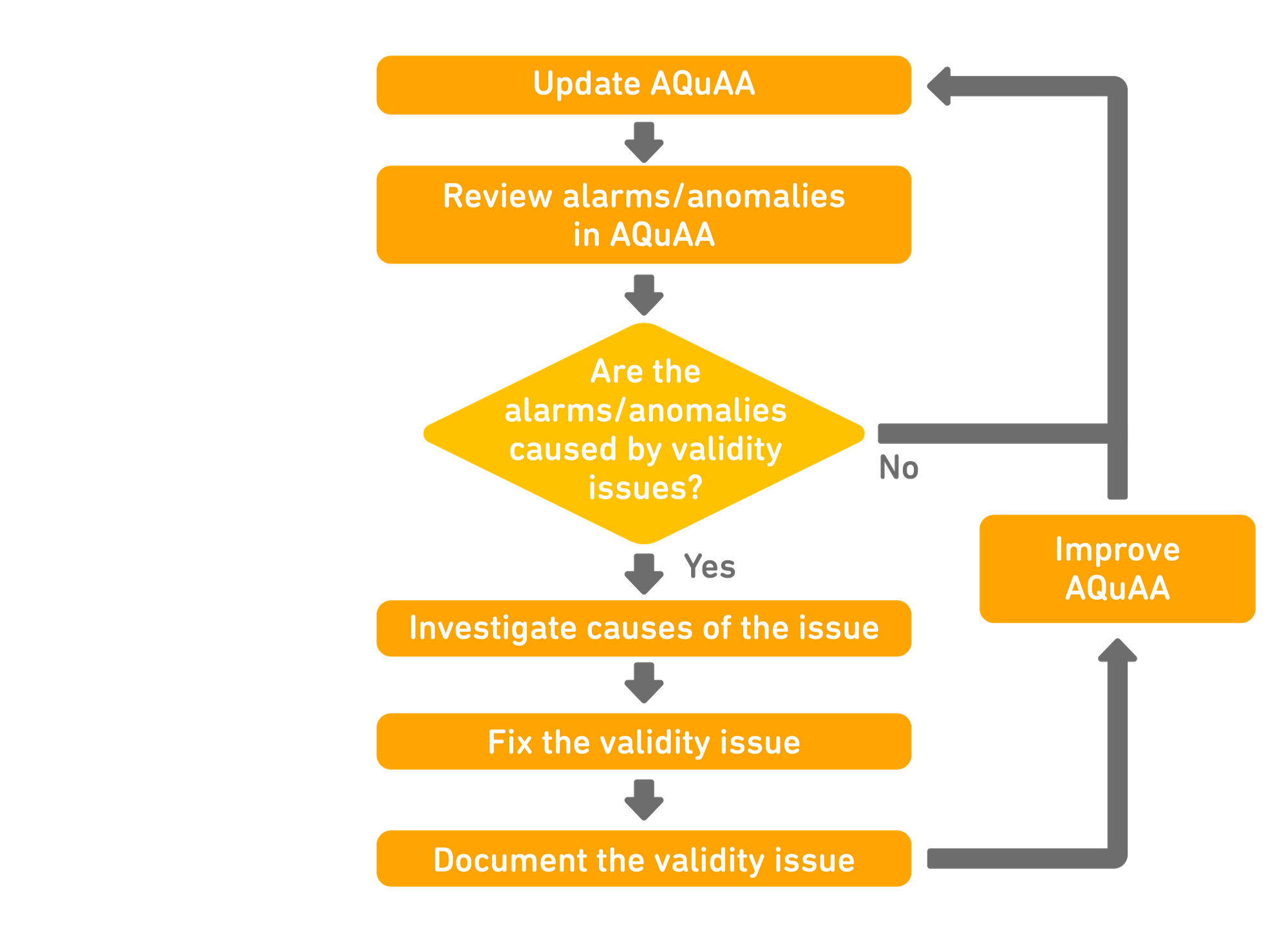

Here, tools that facilitate continuous pattern monitoring and swift communication are paramount. Enter AQuAA: Analytics for Quality Assurance in Assessment, an interactive dashboard that blends educational data mining techniques and psychometric theory.

The AQuAA dashboard allows our psychometricians to evaluate the interaction between the test items, the adaptive test, and the samples of test takers, to ensure scores are consistent over many test administrations. First, a wide range of data mining, psychometric, and visualization techniques are used to gather assessment data and describe trends and seasonal patterns of test scores, in order to detect atypical changes. The dashboard then communicates this information to our team of assessment scientists and psychometricians, helping them to discover issues and respond in a timely manner.
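To make the idea of detecting atypical changes concrete, here is a minimal sketch in Python. It is not how AQuAA itself works: the function name `flag_atypical_days`, the seven-day trailing window, and the z-score threshold of 3 are all assumptions chosen for illustration, and this simple baseline does not model seasonal patterns the way a production system would need to.

```python
from statistics import mean, stdev


def flag_atypical_days(daily_means, window=7, threshold=3.0):
    """Return indices of days whose mean score is far from the recent baseline.

    Hypothetical helper for illustration only. Each day's mean score is
    compared with the mean and standard deviation of the preceding
    `window` days; a day is flagged when its z-score exceeds `threshold`
    in absolute value.
    """
    flagged = []
    for i in range(window, len(daily_means)):
        baseline = daily_means[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline gives no scale to compare against.
            continue
        z = (daily_means[i] - mu) / sigma
        if abs(z) > threshold:
            flagged.append(i)
    return flagged


# Made-up daily mean scores with one obvious jump on day 8.
scores = [100, 101, 99, 100, 102, 98, 100, 101, 120, 100]
print(flag_atypical_days(scores))  # [8]
```

A flag like this would only be a prompt for human review: an unusual day might reflect a real shift in who is taking the test rather than a problem with the test itself.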

Humans in the loop

The Duolingo English Test is the first of its kind; creating a unique quality assurance system to match required a tremendous amount of ingenuity. From determining which statistics should be used as indicators of score validity, to identifying patterns and irregularities relevant to the test’s quality, our experts had to research, test, and deliberate over every step.

Our assessment scientists designed AQuAA to accommodate the features of the Duolingo English Test that make it maximally accessible. Because many key aspects of this digital-first exam are accomplished automatically, its quality assurance requires far more extensive data mining than quality assurance for traditional tests does. AQuAA leverages computational psychometrics to mine and model the educational data needed to run the system, while also allowing our experts to conduct pattern monitoring and reviews continuously, to keep up with our round-the-clock administration.

Designed to adapt

Beyond facilitating quality assurance, AQuAA helps our test developers continually improve the exam. Insights drawn from AQuAA are used to direct the maintenance and improvement of other aspects of the assessment, such as item development. The system was built to be flexible, so that it can adapt and evolve along with the Duolingo English Test. It’s so flexible, in fact, that it could even be adapted to ensure the quality of other digital-first assessments!

To learn more about AQuAA, check out this paper by Senior Assessment Scientist Dr. Mancy Liao, published in the Proceedings of the 14th International Conference on Educational Data Mining.